How to Talk to Bots: 8 Technical SEO Tips

There are a whole lot of technical SEO (search engine optimization) tips, tricks, and strategies you can implement. However, it’s not always easy to know where to get started — or when to stop (there’s always a point of diminishing returns). This is particularly true if SEO isn’t your full-time gig, which is true for many developers, content creators, and even marketers.

Here, to help solve that problem, we’ll take a look at the basics of technical SEO and explore some tips you can use without being an SEO wizard.

What does that mean for you? If you’re a busy site administrator looking for a technical SEO crash course and technical SEO tips you can implement today, you’re in the right place.

What is Technical SEO?

Technical SEO is a subset of SEO that deals with optimizing how search engines find, understand, and index your website.

Or, as I like to put it: technical SEO is how your site talks to Googlebot.

Note: For simplicity, I’ll focus on Google’s search engine here because it’s still the 800-pound gorilla of search engines. But the same principles generally apply to other engines like DuckDuckGo, Bing, and Yandex.

Why does talking to these bots matter? Your pages do — or don’t — get listed on a search results page based on what bots like Googlebot “think” about it. So, if you want your site to rank for relevant search terms, you need to give the bots the right information.

How Search Engines Work

Understanding how to talk to Googlebot effectively begins with understanding how the search process works. Google provides plenty of information on the process and Ahrefs has a solid beginner’s guide, but neither is exactly light reading. Here’s the “in a nutshell” version:

- The bots learn your URL exists: The bots learn a page exists in a variety of ways including backlinks, manual submissions, and sitemaps. Once your page is found, it’s put in a queue to be crawled.

- The crawlers crawl, process, and render your page: When a bot crawls your webpage, it does a number of things to “learn” what the page is about and how it works. This involves downloading the page, rendering it to “see” what a user would see, and using structured data to understand content.

- Your site gets indexed for use in search results: After the bots “learn” about your page, it gets added to an index. This index is referenced when a user conducts a search, so you need to be in it if you want to be on a results page!

- When a user conducts a search, your page is (or isn’t) returned based on what the bots know: Google’s ranking algorithm is proprietary so we don’t know exactly how pages get ranked. However, we do know things like page speed, mobile-friendliness, structured data, and headers all matter. Based on what it “learned” in the previous steps and what it thinks the user wants to know, the bot returns the results it thinks are relevant.
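If it helps to see those four steps as code, here is a toy sketch in Python. To be clear, this is not how Google works internally: the `FAKE_WEB` dictionary, the function names, and the "match every word" ranking are all assumptions made up for illustration. It only shows the general shape of discover, crawl, index, and search.

```python
from collections import deque
import re

# Hypothetical in-memory "web": URL -> (page text, outgoing links).
FAKE_WEB = {
    "/": ("welcome to the sandwich blog", ["/pepper-egg", "/about"]),
    "/pepper-egg": ("pepper and egg sandwich recipe", ["/"]),
    "/about": ("about the sandwich author", []),
    "/orphan": ("nobody links to this sandwich page", []),
}

def crawl_and_index(seed_urls):
    """Discover pages from the seeds, crawl them in queue order, build an index."""
    queue = deque(seed_urls)   # step 1: discovered URLs wait in a crawl queue
    seen = set(seed_urls)
    index = {}                 # word -> set of URLs that contain the word
    while queue:
        url = queue.popleft()
        if url not in FAKE_WEB:
            continue
        text, links = FAKE_WEB[url]   # step 2: "download" the page
        for word in re.findall(r"[a-z]+", text.lower()):
            index.setdefault(word, set()).add(url)   # step 3: add to the index
        for link in links:            # links are one way new URLs get discovered
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return index

def search(index, query):
    """Step 4: return the URLs that contain every word in the query."""
    words = re.findall(r"[a-z]+", query.lower())
    if not words:
        return []
    matches = set.intersection(*(index.get(word, set()) for word in words))
    return sorted(matches)

index = crawl_and_index(["/"])
print(search(index, "sandwich"))
print(search(index, "page"))
```

Notice that `/orphan` never shows up in results: nothing links to it and it was never submitted, so the crawler never learns it exists. That is the problem sitemaps and manual submissions help solve.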

8 Practical Technical SEO Tips

Alright, now you know enough to be dangerous when it comes to technical SEO. Let’s jump into those tips!

Tip 1: Check How Your Site is Indexed Today

You need to start somewhere, and for technical SEO, checking how your site is currently indexed is a great place. There are two simple ways to do this:

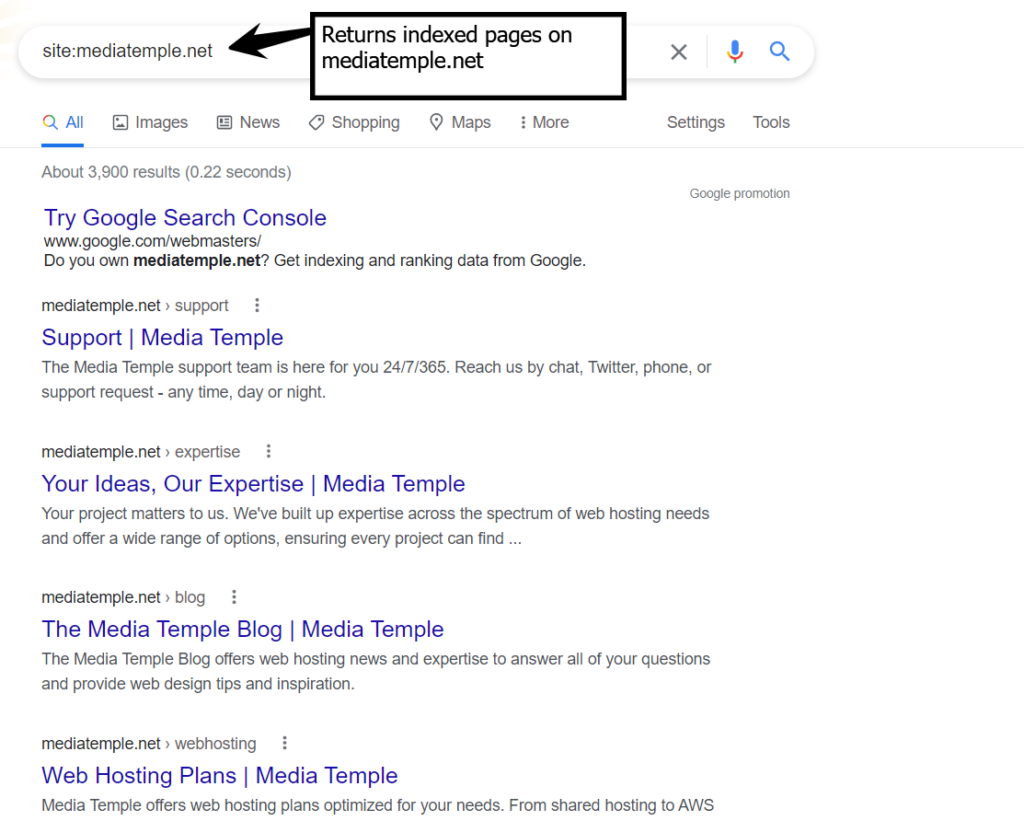

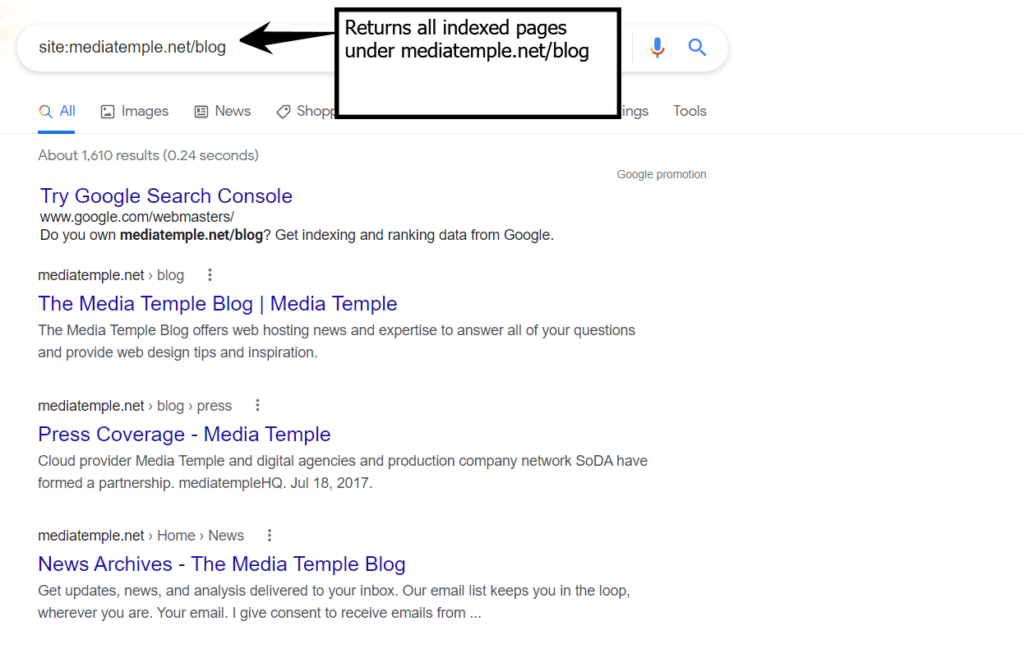

- Use the Google search “site:” operator

As a general rule, Google Search Operators are a great way to refine your search results. In the world of technical SEO, a search using the “site:” operator lets you return results for a specific website, subdomain, or URL path. For example, a Google search for site:mediatemple.net will return all indexed pages on mediatemple.net. Suppose we only wanted to find indexed pages in the Media Temple blog. Since the URL path for the blog is mediatemple.net/blog, a site:mediatemple.net/blog search will do the trick.

- Use the Google Search Console

If you’re not using Google Search Console, you should probably start. It allows you to capture a lot of information related to your website’s search rankings. For example, you can see all the pages on your site Google knows about and review a list of potential issues you can resolve for some quick technical SEO wins.

At a high level, be on the lookout for problems such as duplicate content, no search results, results you do NOT want to appear in searches, or fewer results than expected. We’ll touch on how to address many of those problems in some of our other tips. The short version is: they can often be resolved through some combination of sitemaps, robots.txt files, or changes in the Google Search Console.
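If you check the same “site:” searches regularly, a tiny helper can build the search URLs for you. This is a minimal sketch: the `site_search_url` function name is made up for illustration, and it only builds a URL you can open in a browser. It does not fetch or parse results.

```python
from urllib.parse import urlencode

def site_search_url(domain_or_path):
    """Build a Google search URL that uses the site: operator."""
    query = urlencode({"q": f"site:{domain_or_path}"})
    return "https://www.google.com/search?" + query

# Whole site, then only the blog path.
print(site_search_url("mediatemple.net"))
print(site_search_url("mediatemple.net/blog"))
```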

Tip 2: Make Sure Your Pages Load Fast

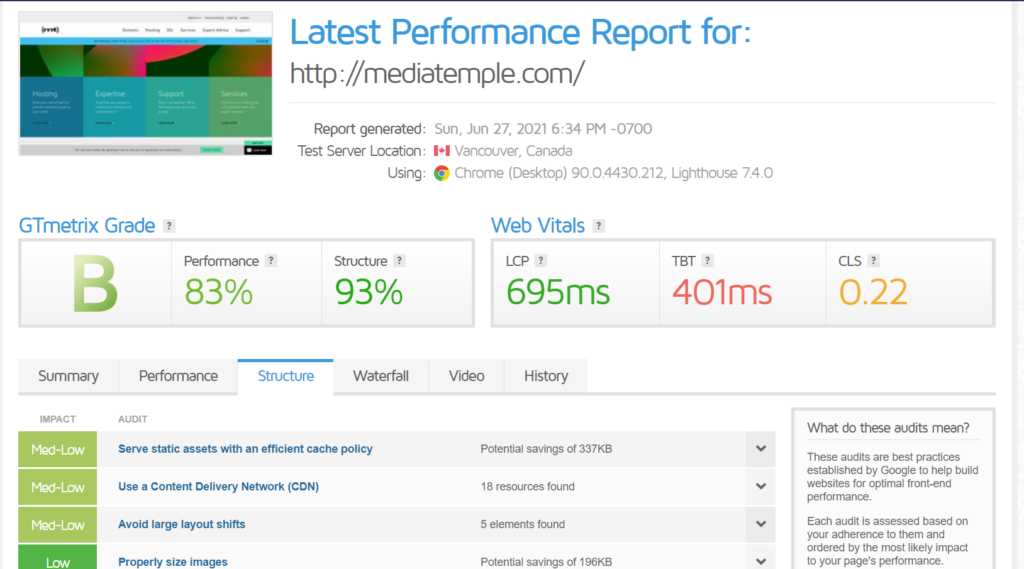

When it comes to search rankings, the bots really care about speed (especially after the latest algorithm update). While there is no hard rule on how “fast” is “fast enough”, most recommendations are for 2-3 second page load times and the general consensus is “the faster, the better”.

Of course, there is no one-size-fits-all answer to how you can make your website load faster, but here are some resources to help you get started:

- GTmetrix is a good tool for baselining a webpage’s performance, and it provides some actionable recommendations.

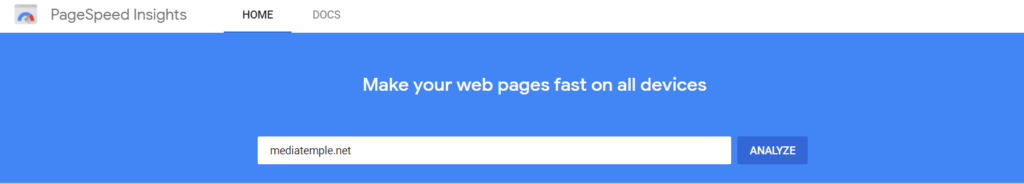

- Google PageSpeed Insights is another solid tool for understanding how your web pages perform, and the results are from the horse’s mouth.

- For a deeper dive into optimizing your site performance for improved search rankings, Nathan Reimnitz has you covered.

Tip 3: Get Your Headers Right

Generally, headers are a great way to break up content for your readers. A “Header 1” (H1) is the main idea, an H2 is a subtopic, an H3 is a subtopic of that subtopic, and so on.

For technical SEO, headers are represented by specific HTML tags. For example, an H1 of “Pepper and egg sandwiches are the best sandwiches ever” would look like this in HTML:

<h1>Pepper and egg sandwiches are the best sandwiches ever</h1>

A lot of the content out there around SEO would have you believe you really need to think hard about your header strategy for technical SEO. Fortunately, as John Mueller indicated in a Google Webmaster Central hangout (time stamped), you probably just need to use headers to contextualize sections of your content so the bots know what they’re reading. The good news is: that’s basically the same thing you should be doing anyway to make your content readable for humans.

So, what should you do to get your headers right?

- Use only one H1 per page

- Use headers to break up your content for human readers

- Make your headers descriptive enough to contextualize content
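A quick way to sanity-check the rules above is to pull the heading outline out of a page and count the H1s. Here is a minimal sketch using Python’s built-in `html.parser`. The `HeadingOutline` class, the `check_headings` function, and the sample HTML are assumptions for illustration, not part of any SEO tool.

```python
from html.parser import HTMLParser

class HeadingOutline(HTMLParser):
    """Collect (level, text) for every h1-h6 element in document order."""
    HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None   # level of the heading currently open, if any
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADING_TAGS:
            self._level = int(tag[1])
            self._text = []

    def handle_data(self, data):
        if self._level is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag in self.HEADING_TAGS and self._level is not None:
            self.headings.append((self._level, "".join(self._text).strip()))
            self._level = None

def check_headings(html):
    """Return the heading outline and the number of H1 elements."""
    parser = HeadingOutline()
    parser.feed(html)
    h1_count = sum(1 for level, _ in parser.headings if level == 1)
    return parser.headings, h1_count

# Hypothetical sample page.
SAMPLE = """
<h1>Pepper and egg sandwiches are the best sandwiches ever</h1>
<h2>Ingredients</h2>
<h2>How to make one</h2>
<h3>Frying the peppers</h3>
"""

headings, h1_count = check_headings(SAMPLE)
for level, text in headings:
    print("  " * (level - 1) + f"H{level}: {text}")
print("H1 count:", h1_count)
```

If the H1 count is anything other than 1, or the indented outline doesn’t read like a sensible table of contents, that’s a hint your headers need another look.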

For WordPress sites, the Yoast plugin can be a useful tool to analyze the quality of your headers and other aspects of a page’s SEO. For non-WordPress sites, they have a free online content analysis tool too.

Tip 4: Understand How to Use Tags

Header tags are just one type of HTML tag. There are plenty more you can use to improve your technical SEO. Here are some of the most important, and the specific problems they can help you solve.

- Use rel=canonical to avoid issues with duplicate content. Duplicate content is bad for SEO. However, it can be a common occurrence for content to appear in multiple places on your site. For example, if your site serves the same content at www.<yoursite>.com and <yoursite>.com (without the www.), you have duplicate content. Using the rel=canonical tag helps tell bots which page they should refer users to. It’s straightforward in some cases, but knowing when to use canonical tags vs 301 redirects can get tricky, so check out this Moz article if you need more guidance.

- Use a noindex meta tag to prevent a page from being indexed. Sometimes you don’t want pages to be indexed (login screens, thank-you pages, logs, etc.). In that case, a noindex meta tag can help.

- Use alt tags to improve SEO for images. Images are an important part of SEO, but how can you tell the bots what your images are about? Alt tags (strictly speaking, the alt attribute on an img tag). Given their purpose, your alt tags should literally describe your image, not supplement it in some way.
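To make the three tags above concrete, here is a minimal Python sketch that scans a page for a canonical link, a robots noindex meta tag, and images without alt text. The sample pages, the example.com URLs, and the `TagAudit` class are all hypothetical, so treat this as an illustration of what the markup looks like rather than a full audit tool.

```python
from html.parser import HTMLParser

class TagAudit(HTMLParser):
    """Record the canonical URL, noindex status, and images lacking alt text."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.noindex = False
        self.images_missing_alt = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            self.canonical = attrs.get("href")
        elif tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.noindex = "noindex" in (attrs.get("content") or "").lower()
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            # Flags missing or empty alt text. Purely decorative images may
            # intentionally use an empty alt, so review these by hand.
            self.images_missing_alt.append(attrs.get("src"))

def audit(html):
    parser = TagAudit()
    parser.feed(html)
    return parser

# Hypothetical content page: canonical URL set, one image missing alt text.
PAGE = """
<head>
  <link rel="canonical" href="https://www.example.com/sandwiches/">
</head>
<body>
  <img src="sandwich.jpg" alt="Pepper and egg sandwich on a plate">
  <img src="hero.jpg">
</body>
"""

# Hypothetical thank-you page that should stay out of the index.
THANK_YOU_PAGE = '<head><meta name="robots" content="noindex"></head>'

page = audit(PAGE)
print("Canonical:", page.canonical)
print("Images missing alt:", page.images_missing_alt)
print("Thank-you page noindex:", audit(THANK_YOU_PAGE).noindex)
```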

Tip 5: Use your robots.txt

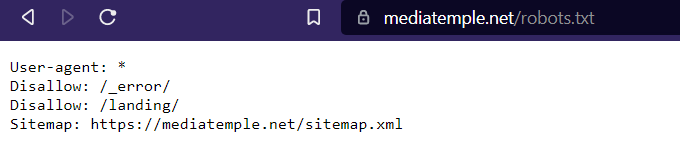

The robots.txt file on a website gives instructions to web crawlers (although not all bots respect the instructions). A robots.txt file should exist at yoursite/robots.txt. For example, ours is at https://mediatemple.net/robots.txt

For WordPress users, there is a default robots.txt file in most cases. You can also use plugins like Yoast to create/edit your robots.txt or manually upload one via FTP.

There are a variety of things you can do in a robots.txt file including:

- Apply rules to all crawlers or only to specific ones by user agent

- Disallow specific URL paths from being crawled

- Point bots to a sitemap for your website

If you’re just getting started with technical SEO, at least make sure you are not blocking crawlers from the pages you want indexed and are pointing the bots to your sitemap. Robots.txt is a plaintext file and the directives are fairly straightforward, but if you need a crash course this Cloudflare article can help.
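One way to double-check that you aren’t blocking pages you care about is to run your rules through Python’s built-in `urllib.robotparser`. The robots.txt contents and the example.com URLs below are made up for illustration, and `site_maps()` needs Python 3.8 or newer. Keep in mind this parser’s matching may not agree with Googlebot in every edge case, so it is a sanity check rather than the final word.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block two paths for every crawler, list a sitemap.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /thank-you/

Sitemap: https://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Pages you want crawled should be allowed; private ones should not.
blog_allowed = parser.can_fetch("Googlebot", "https://www.example.com/blog/")
thanks_allowed = parser.can_fetch("Googlebot", "https://www.example.com/thank-you/")
sitemaps = parser.site_maps()

print("Blog crawlable:", blog_allowed)
print("Thank-you page crawlable:", thanks_allowed)
print("Sitemaps:", sitemaps)
```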

Tip 6: Use Sitemaps to Your Advantage

Sitemaps are a great way to give bots an idea of how your site is structured and help nudge them in the right direction when they crawl your site. In addition to pointing to them in your robots.txt file, you can directly submit them to the Google Search Console. This helps you make sure the bots have the most up-to-date information on your site. For WordPress sites, plugins like Yoast can make creating and managing sitemaps simpler.
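If you aren’t on WordPress, or just want to see what a sitemap actually contains, here is a minimal sketch that builds one with Python’s standard library. The `build_sitemap` function, the URLs, and the dates are hypothetical. The `urlset`, `url`, `loc`, and `lastmod` element names come from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """Return sitemap XML for an iterable of (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified  # YYYY-MM-DD
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

# Hypothetical pages and modification dates.
sitemap_xml = build_sitemap([
    ("https://www.example.com/", "2021-01-15"),
    ("https://www.example.com/blog/", "2021-02-01"),
])
print(sitemap_xml)
```

You would save the output as something like sitemap.xml at the root of your site, reference it from robots.txt, and submit it in the Google Search Console.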

Tip 7: Use HTTPS Everywhere

This one is easy: use SSL/TLS (i.e., HTTPS connections) for your website. Why? HTTPS helps keep your visitors’ data private, improves security, and has been a Google search ranking signal since 2014.

Tip 8: Optimize for Mobile

Mobile-first indexing means Google mostly uses the mobile version of a website for ranking and indexing purposes. In July 2019, Google made mobile-first indexing the default for all new websites. That means that how your site performs on mobile can have a huge impact on your rankings.

Therefore, you should make sure you optimize your site for performance, readability, and usability on mobile. Exactly how you do this will vary depending on how you manage your website. For example, AMP (Accelerated Mobile Pages) is a framework originally designed by Google that aims to help pages load faster on mobile. For WordPress sites, there are many themes and plugins that can help make optimizing for mobile simple.

Final Thoughts: Offloading SEO Complexity

There is a lot to getting SEO right, and that’s part of the reason why there are so many dedicated SEO professionals. While it is certainly possible to learn enough technical SEO to do it yourself, sometimes offloading the complexity makes sense.

In some cases, that means hiring an SEO professional to do the work. In other cases, a managed WordPress solution can help minimize your workload. For example, Media Temple’s Managed WordPress plans include:

- SSL certificates

- CDN to improve page speed

- SEO optimizer

- 24/7 expert support